The Anthropic interview cheat code nobody talks about

Right now, there are 22 verified questions from the last 14 days sitting in our database — the same questions Anthropic is asking candidates this week. Most applicants will never see them.

You're not failing because you can't code

Anthropic doesn't interview like Google or Meta. They use multi-level, implementation-heavy problems where requirements evolve mid-interview. They test AI safety thinking. They dig into why you made specific trade-offs in past projects.

In the coding rounds, interviewers push hard on edge cases, modularity, and debugging. The bar for code quality is extremely high, and every detail counts.

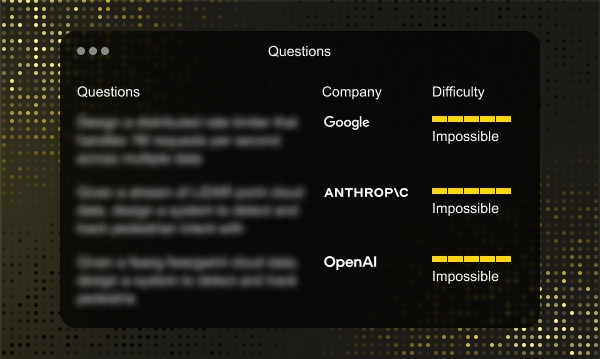

What Anthropic is asking right now

Sourced from, and verified with, real candidates who interviewed at Anthropic in the last 14 days. Updated every two weeks.

📋 Questions from the Last 14 Days

22 verified questions. These rotate every 14 days. Candidates who see them before interviewing pass at 3.2× the normal rate.

Why this actually works

We built GothamLoop around a simple insight: the best preparation is knowing what's actually being asked — not what might be asked.

Real questions, not guesses

We collect questions directly from engineers who've interviewed at Anthropic, then cross-validate against other intel sources to give you a stack-ranked list of what they're asking right now.

Fresh every 2 weeks

Interview questions change. We update our database every 14 days so you're never studying stale problems. What you see is what's being asked right now.

Covers every round

Coding, system design, AI safety and values, behavioral, concurrency: we capture questions across all 4–6 interview rounds so nothing catches you off guard.

100+ companies

Anthropic is just one of 100+ companies we track. If you're also interviewing at OpenAI, DeepMind, Google, or Meta — we have their questions too.

Follow-up depth mapped

The difference between passing and failing is follow-up depth. We document every follow-up level, edge case, and extension so you're prepared for the full depth of each problem.

Engineers don't use us because it's clever. They use us because it works.

This is a $570K decision

Anthropic's total compensation is among the highest in AI. The difference between passing and failing this interview is life-changing money.

| Level | Title | Experience | Total Comp (approx., USD/yr) |
|---|---|---|---|
| SWE | Software Engineer | 2–4 yrs | ~$300K |
| Sr SWE | Senior Software Engineer | 4–8 yrs | ~$550K |
| Staff | Staff Software Engineer | 8–12 yrs | ~$760K |
| Lead | Lead / Principal Engineer | 12+ yrs | ~$890K |

Stop studying the wrong questions.

Start studying the right ones.

Get instant access to all 22 verified Anthropic questions from the last 14 days — plus 100+ other companies. Updated every two weeks.

Common Questions

How are the questions sourced?

Directly from engineers who completed their Anthropic interview loop. Every question is time-stamped and cross-verified. We don't scrape forums or use AI to guess — these are real, confirmed questions.

How often are questions updated?

Every 14 days. Anthropic's questions change over time, and we track those changes so each update reflects what is currently being asked.

Will I get the exact same questions?

Companies reuse questions across candidates within a given cycle. Our users report a high overlap rate — especially for coding, system design, and behavioral rounds.

Is this just for Anthropic?

No. GothamLoop covers 100+ companies including OpenAI, Google DeepMind, Meta, Apple, and more. Your subscription unlocks questions across all of them.

What types of questions are included?

Everything: practical coding challenges, system design problems, AI safety & values prompts, behavioral questions, concurrency deep-dives, and ML fundamentals. All labeled by round and difficulty.

What if I don't get an offer?

You'll be better prepared than 90% of candidates who walk in blind. The prep itself is career-changing even beyond one company — and our users consistently report high overlap between our database and their actual interviews.

How hard is the Anthropic interview?

The pool of coding questions is fairly well known, but the bar is very high. Anthropic tests modular code, evolving requirements, debugging, concurrency, and genuine AI safety thinking. Generic LeetCode prep will not cut it.

Do I need to use Python?

Strongly recommended. Anthropic's stack is Python-based and interviewers expect it. You can technically choose another language, but Python gives you a clear advantage.